Technical Paper Title: IMAGE PROCESSING LANE DEPARTURE SYSTEM WITH EDGE DETECTION TECHNIQUE USING HOUGH TRANSFORM

Authors: A. RAJYA LAKSHMI & J. MOUNIKA, 2nd BTech, ECE

College: Prakasam Engineering College, Kandukur

ABSTRACT

A famous quotation goes: “Safety is a cheap and effective insurance policy.”

Safety is one of the most important considerations in automotive design, and considerable effort has gone into improving it. Dozens of processors control every performance aspect of today’s automobiles.

Most currently available safety features rely on a wide array of sensors (principally involving microwaves, infrared light, lasers, accelerometers, or position detection) for applications such as front and rear warning, lane detection warning, and automatic emergency braking.

In this paper, we attempt to improve automotive safety with the help of image processing, which aids the driver in driving efficiently.

The safety feature considered is lane departure warning. A high-speed camera captures images of the road scene ahead at regular intervals. These images are moved to memory for further processing and then passed through an edge detection stage, which uses our new algorithm to detect the lane markings on the road. The Hough transform then extracts these markings as lines, which are compared with the current car position. This lets the system keep a constant watch on the car’s position within the lane.

This concept could also support the future development of automatic driving on highways. Moreover, high-speed processors such as Blackfin processors make it feasible to implement this system in real time.

1. VIDEO IN AUTOMOTIVE SAFETY SYSTEMS

In many ways, car safety can be greatly enhanced by video-based systems that use high-performance media processors. Because short response times are critical to saving lives, however, image processing and video filtering must be done deterministically in real time. There is a natural tendency to use the highest video frame rates and resolution that a processor can handle for a given application, since this provides the best data for decision making. In addition, the processor needs to compare vehicle speeds and relative vehicle-object distances against desired conditions—again in real time. Furthermore, the processor must interact with many vehicle subsystems (such as the engine, braking, steering, and airbag controllers), process sensor information from all these systems, and provide appropriate audiovisual output to the driver. Finally, the processor should be able to interface to navigation and telecommunication systems to react to and log malfunctions, accidents, and other problems.

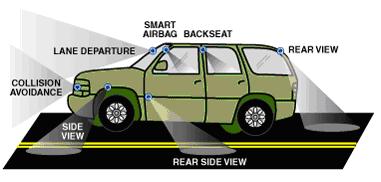

Figure 1. Basic camera placement regions for automotive applications

Figure 1 shows the basic video operational elements of an automotive safety system, indicating where image sensors might be placed throughout a vehicle, and how a lane departure system might be integrated into the chassis. There are a few things worth noting. First, multiple sensors can be shared by different automotive safety functions. For example, the rear-facing sensors can be used when the vehicle is backing up, as well as to track lanes as the vehicle moves forward. In addition, the lane-departure system might accept feeds from any of a number of camera sources, choosing the appropriate inputs for a given situation. In a basic system, a video stream feeds its data to the embedded processor. In more advanced systems, the processor receives other sensor information, such as position data from GPS receivers.

2. LANE DEPARTURE—A SYSTEM EXAMPLE

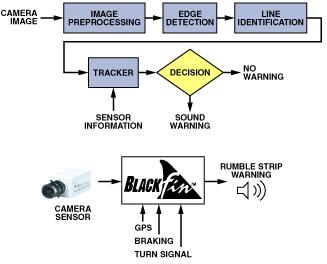

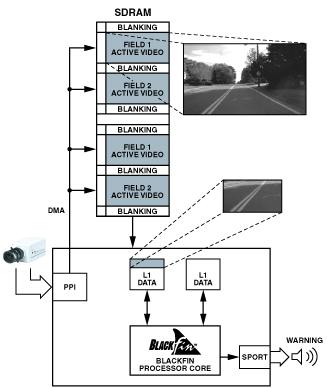

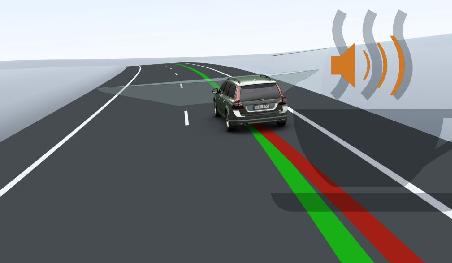

The overall system diagram of Figure 2 is fairly straightforward, considering the complexity of the signal processing functions being performed. Interestingly, in a video-based lane departure system, the bulk of the processing is image-based, and is carried out within a signal processor rather than by an analog signal chain. This represents a significant saving on the system bill of materials. The output to the driver consists of a warning to correct the car’s projected path before the vehicle leaves the lane unintentionally. It may be an audible “rumble-strip” sound, a programmed chime, or a voice message.

Figure 2. Basic steps in a lane-departure algorithm and how the processor might connect to the outside world.

The video input system to the embedded processor must perform reliably in a harsh environment, including wide and drastic temperature shifts and changing road conditions. As the data stream enters the processor, it is transformed—in real time—into a form that can be processed to output a decision. At the simplest level, the lane departure system looks for the vehicle’s position with respect to the lane markings in the road. To the processor, this means the incoming stream of road imagery must be transformed into a series of lines that delineate the road surface.

The processor can find lines within a field of data by looking for edges. These edges form the boundaries within which the driver should keep the vehicle while it is moving forward. The processor must track these line markers and determine whether to notify the driver of irregularities.

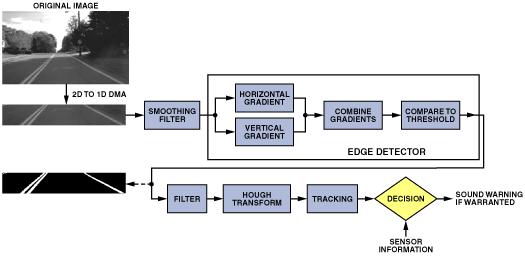

Figure 3. Algorithm flow, showing results of intermediate image-processing steps

Let’s now drill deeper into the basic components of the lane-departure system example. Figure 3 follows the same basic operational flow as Figure 2 but with more insight into the algorithms being performed. The video stream coming into the system needs to be filtered and smoothed to reduce noise caused by temperature, motion, and electromagnetic interference. Without this step, it would be difficult to find clean lane markings.

The next processing step involves edge detection; if the system is set up properly, the edges found will represent the lane markings. These lines must then be matched to the direction and position of the vehicle. The Hough transform will be used for this step. Its output will be tracked across frames of images, and a decision will be made based on all the compiled information. The final challenge is to send a warning in a timely manner without sounding false alarms.

2.1. IMAGE ACQUISITION

An important feature of the processor is its parallel peripheral interface (PPI), which is designed to handle incoming and outgoing video streams. The PPI connects without external logic to a wide variety of video converters.

For automotive safety applications, image resolutions typically range from VGA (640 × 480 pixels/image) down to QVGA (320 × 240 pixels/image). Regardless of the actual image size, the format of the data transferred remains the same—but lower clock speeds can be used when less data is transferred. Moreover, in the most basic lane-departure warning systems, only gray-scale images are required. The data bandwidth is therefore halved (from 16 bits/pixel to 8 bits/pixel) because chroma information can be ignored.
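The following Python sketch illustrates the bandwidth figures above. It computes bytes per frame for VGA and QVGA at 16 and 8 bits/pixel, and shows how luma could be picked out of a 4:2:2 line. It assumes the ITU-R BT.656 byte order Cb, Y, Cr, Y, in which every luma sample sits at an odd byte offset. The function names are hypothetical, and on the target this selection would more likely be done by the capture hardware or DMA than in software.

```python
def frame_bytes(width: int, height: int, bits_per_pixel: int) -> int:
    """Bytes needed to store one frame at the given pixel depth."""
    return width * height * bits_per_pixel // 8


def extract_luma(line: bytes) -> bytes:
    """Keep only the Y samples of a 4:2:2 line stored as Cb Y Cr Y Cb Y Cr Y ...

    In this byte order every luma sample is at an odd index, so a stride-2
    slice starting at index 1 keeps Y and drops Cb and Cr.
    """
    return line[1::2]


if __name__ == "__main__":
    for name, (w, h) in {"VGA": (640, 480), "QVGA": (320, 240)}.items():
        color = frame_bytes(w, h, 16)  # YCbCr 4:2:2, 16 bits/pixel
        gray = frame_bytes(w, h, 8)    # luma only, 8 bits/pixel
        print(f"{name}: {color} bytes/frame in color, {gray} in gray scale")

    # Two pixels: Cb=0x80, Y=0x10, Cr=0x80, Y=0x20
    print(list(extract_luma(bytes([0x80, 0x10, 0x80, 0x20]))))  # [16, 32]
```

For VGA this gives 614,400 bytes per frame in color versus 307,200 in gray scale, which is the halving described above.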

2.2. MEMORY AND DATA MOVEMENT

Efficient memory usage is an important consideration for system designers because external memories are expensive, and their access times can have high latencies. While Blackfin processors have an on-chip SDRAM controller to support the cost-effective addition of larger, off-chip memories, it is still important to be judicious in transferring only the video data needed for the application. By intelligently decoding ITU-R 656 preamble codes, the PPI can aid this “data-filtering” operation. For example, in some applications, only the active video fields are required. In other words, horizontal and vertical blanking data can be ignored and not transferred into memory, resulting in up to a 25% reduction in the amount of data brought into the system. What’s more, this lower data rate helps conserve bandwidth on the internal and external data buses.
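To check the order of magnitude of the blanking savings, the sketch below computes the share of an ITU-R 656 frame that is active picture. The timing numbers are assumptions for 625-line/50 Hz video: 1728 bytes per line in total, 1440 of them active, and 576 active lines out of 625. The helper name is hypothetical.

```python
def active_fraction(total_bytes_per_line: int, active_bytes_per_line: int,
                    total_lines: int, active_lines: int) -> float:
    """Fraction of a raw ITU-R 656 frame that carries active picture data."""
    active = active_bytes_per_line * active_lines
    total = total_bytes_per_line * total_lines
    return active / total


# Assumed 625-line/50 Hz timing: 864 samples per line (720 active), two bytes
# per luma sample (Y plus alternating Cb/Cr), and 576 active lines out of 625.
frac = active_fraction(1728, 1440, 625, 576)
print(f"active video: {frac:.1%}, saved by skipping blanking: {1 - frac:.1%}")
```

With these numbers about 76.8% of the stream is active video. Skipping blanking therefore saves roughly 23%, which is consistent with the "up to 25%" figure above.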

Because video data rates are very demanding, frame buffers must be set up in external memory, as shown in Figure 4.

Figure 4. Use of external memory for a frame buffer.

In this scenario, while the processor operates on one buffer, a second buffer is being filled by the PPI via a DMA transfer. A simple semaphore can be set up to maintain synchronization between the frames. With Blackfin’s flexible DMA controller, an interrupt can be generated at virtually any point in the memory fill process, but it is typically configured to occur at the end of each video line or frame.
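The ping-pong arrangement can be modeled in Python, with one thread standing in for the PPI/DMA and another for the processor core. This is a sketch, not Blackfin code. The "end of frame" interrupt is represented by releasing a semaphore. The design uses a pair of semaphores per buffer, one meaning "free" and one meaning "full", which is an assumption rather than the single semaphore mentioned above.

```python
import threading

NUM_FRAMES = 6
buffers = [None, None]                                     # "ping" and "pong"
empty = [threading.Semaphore(1), threading.Semaphore(1)]   # buffer free to fill
full = [threading.Semaphore(0), threading.Semaphore(0)]    # buffer ready to process
processed = []


def dma_fill():
    """Simulated PPI/DMA: fills the two buffers alternately, one frame each."""
    for frame in range(NUM_FRAMES):
        i = frame % 2
        empty[i].acquire()             # wait until the core has released this buffer
        buffers[i] = f"frame {frame}"  # stand-in for the DMA transfer of pixel data
        full[i].release()              # "end of frame" interrupt: buffer is ready


def core_process():
    """Simulated core: processes buffers in the same alternating order."""
    for frame in range(NUM_FRAMES):
        i = frame % 2
        full[i].acquire()              # wait for a complete frame
        processed.append(buffers[i])   # stand-in for lane-detection work
        empty[i].release()             # hand the buffer back to the DMA


producer = threading.Thread(target=dma_fill)
consumer = threading.Thread(target=core_process)
producer.start()
consumer.start()
producer.join()
consumer.join()
print(processed)
```

The DMA can run at most one frame ahead of the core, and the core never reads a buffer that is still being filled.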

Once a complete frame is in SDRAM, the data is normally transferred into internal L1 data memory so that the core can access it with single-cycle latency. To do this, the DMA controller can use two-dimensional transfers to bring in pixel blocks. Figure 5 shows an example of how a 16 × 16 “macroblock,” a construct used in many compression algorithms, can be stored linearly in L1 memory via a 2D DMA engine.

Figure 5. A 2D to 1D DMA transfer from SDRAM into L1 memory.

To efficiently navigate through a source image, four parameters need to be controlled: X Count, Y Count, X Modify, and Y Modify. X and Y Counts describe the number of elements to read in/out in the “horizontal” and “vertical” directions, respectively. Horizontal and vertical are abstract terms in this application because the image data is actually stored linearly in external memory. X and Y Modify values achieve this abstraction by specifying an amount to “stride” through the data after the requisite X Count or Y Count has been transferred.
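The X/Y Count and Modify parameters can be illustrated by generating the source addresses that a 2D DMA would visit. The sketch assumes that X Modify is added after every element except the last in a row, and that Y Modify is added after the last element instead. Under that model, a 16 × 16 block of 8-bit pixels in an image of width W needs X Modify = 1 and Y Modify = W − 15. The function names are hypothetical, and the processor's hardware reference is the authority on the exact register semantics.

```python
from typing import Iterator, List


def dma_2d_addresses(start: int, x_count: int, x_modify: int,
                     y_count: int, y_modify: int) -> Iterator[int]:
    """Yield the source addresses visited by a modeled 2D DMA transfer.

    Assumed model: after each element except the last in a row the address
    advances by x_modify; after the last element of a row it advances by
    y_modify instead, landing on the first element of the next row.
    """
    addr = start
    for _ in range(y_count):
        for col in range(x_count):
            yield addr
            addr += x_modify if col < x_count - 1 else y_modify


def fetch_macroblock(image: List[int], width: int, top: int, left: int,
                     size: int = 16) -> List[int]:
    """Copy a size x size block of a row-major 8-bit image into a linear list,
    as a 2D-to-1D DMA into L1 memory would."""
    y_modify = width - (size - 1)  # from the end of one row to the start of the next
    start = top * width + left
    return [image[a] for a in dma_2d_addresses(start, size, 1, size, y_modify)]


if __name__ == "__main__":
    width, height = 64, 48
    image = [(y * width + x) % 256 for y in range(height) for x in range(width)]
    block = fetch_macroblock(image, width, top=2, left=3)
    print(len(block), block[:3], block[16:19])
```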

2.3 IMAGE FILTERING

Before doing any type of edge detection, it is important to filter the image to smooth out any noise picked up during image capture. This is essential because noise introduced into an edge detector can result in false edges output from the detector.

Obviously, an image filter needs to operate fast enough to keep up with the succession of input images. Thus, it is imperative that image filter kernels be optimized for execution in the fewest possible number of processor cycles.
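The paper does not specify which filter is used. The sketch below shows one common choice: a 3 × 3 binomial (Gaussian-like) kernel [1 2 1]ᵀ[1 2 1]/16. It uses only integer additions, multiplications by small constants, and a rounding shift, which suits a fixed-point processor. Border pixels are simply copied. Both the kernel choice and the border handling are assumptions.

```python
from typing import List


def smooth3x3(img: List[List[int]]) -> List[List[int]]:
    """Smooth an 8-bit image with the 3x3 binomial kernel [1 2 1]^T [1 2 1] / 16.

    Each output pixel is a weighted sum of its 3x3 neighborhood computed with
    integer arithmetic only; the division by 16 is a rounding right shift.
    Border pixels are copied unchanged (an assumption, not from the paper).
    """
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            acc = 0
            for dy, wy in ((-1, 1), (0, 2), (1, 1)):           # vertical weights 1, 2, 1
                r = img[y + dy]
                acc += wy * (r[x - 1] + 2 * r[x] + r[x + 1])   # horizontal 1, 2, 1
            out[y][x] = (acc + 8) >> 4                         # /16 with rounding
    return out
```

A uniform region passes through unchanged, while an isolated noisy pixel is spread over its neighbors and attenuated. This is the behavior that suppresses false edges later.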

3. EDGE DETECTION METHOD

3.1 DETAILS OF THE METHODOLOGY

In this section, we first convert the input color image into a grayscale image. The conversion used in this scheme is

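$$G(x,y) = 0.3\,r(x,y) + 0.59\,g(x,y) + 0.11\,b(x,y), \qquad x = 1,\dots,M,\quad y = 1,\dots,N \qquad (1)$$

where G(x, y) is the gray value of the pixel at (x, y), M and N are the numbers of rows and columns, and r, g, b are the red, green and blue components of that pixel.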

After this conversion, every pixel in the image holds a single gray value.
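The conversion formula above translates directly into code. The floating-point version uses the weights as given. The integer version is an assumed fixed-point variant that approximates 0.3, 0.59 and 0.11 with 77/256, 151/256 and 28/256, which might be preferable on a fixed-point processor. Both function names are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (r, g, b), each 0..255


def to_gray(rgb: List[List[Pixel]]) -> List[List[int]]:
    """G = 0.3 r + 0.59 g + 0.11 b for every pixel, rounded to an integer."""
    return [[round(0.3 * r + 0.59 * g + 0.11 * b) for (r, g, b) in row] for row in rgb]


def to_gray_fixed(rgb: List[List[Pixel]]) -> List[List[int]]:
    """Assumed fixed-point variant: weights 77/256, 151/256, 28/256 (sum = 256)."""
    return [[(77 * r + 151 * g + 28 * b) >> 8 for (r, g, b) in row] for row in rgb]
```

Because the integer weights sum to 256, white (255, 255, 255) still maps to 255. The truncating shift can land one gray level below the floating-point result.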

Our algorithm detects edges by comparing each pixel with a neighboring pixel, in the following two cases:

1) Compare the pixel with the pixel above it.

2) Compare the pixel with the pixel to its left.

3.2 ALGORITHM FOR THE PROPOSED METHODOLOGY

An algorithm for the proposed methodology is described as follows.

1) Input the bitmapped image I_inp of size M × N and the threshold value T.

2) Convert the bitmapped image into a grayscale image G(x, y) using (1).

Case 1:

3) Take the gray value of the current pixel (First) and of its left neighbor (Left), compute their difference, and store it in CO.

4) Compare CO with the threshold T and, according to the result, set the pixel to white or black.

Algorithm 1.1

For i = 1 to M

For j = 2 to N

First = G(i, j)

Left = G(i, j-1)

CO = First - Left

If CO > T then

COLOR = WHITE

Else

COLOR = BLACK

End If

Track Edge

Next j

Next i

Case 2:

5) Take the gray value of the current pixel (First) and of the pixel above it (Top), compute their difference, and store it in CO.

6) Compare CO with the threshold T and, according to the result, set the pixel to white or black.

Algorithm 1.2

For i = 2 to M

For j = 1 to N

First = G(i, j)

Top = G(i-1, j)

CO = First - Top

If CO > T then

COLOR = WHITE

Else

COLOR = BLACK

End If

Track Edge

Next j

Next i

Track Edge:

If COLOR=BLACK then

Set pixel as black

Else Set pixel as white
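Algorithms 1.1 and 1.2 can be sketched in Python as follows. The sketch follows the pseudocode literally and compares the signed difference CO = First − neighbor with T. As a result, only transitions where the current pixel is brighter than its left (or upper) neighbor produce a white pixel. Using the absolute difference would be a variant, not what the pseudocode states. The paper does not specify how the two cases are combined, so the OR in the hypothetical combined_edge_map is an assumption.

```python
from typing import List

WHITE, BLACK = 255, 0


def edge_map(gray: List[List[int]], T: int, di: int, dj: int) -> List[List[int]]:
    """Binary edge map from the signed difference with one neighbor.

    (di, dj) = (0, 1) compares with the left pixel (Algorithm 1.1);
    (di, dj) = (1, 0) compares with the top pixel (Algorithm 1.2).
    Pixels without that neighbor (first column or first row) stay BLACK.
    """
    M, N = len(gray), len(gray[0])
    out = [[BLACK] * N for _ in range(M)]
    for i in range(di, M):
        for j in range(dj, N):
            co = gray[i][j] - gray[i - di][j - dj]  # CO = First - neighbor
            out[i][j] = WHITE if co > T else BLACK   # Track Edge
    return out


def combined_edge_map(gray: List[List[int]], T: int) -> List[List[int]]:
    """Assumption: mark a pixel WHITE if either case marks it WHITE."""
    left = edge_map(gray, T, 0, 1)
    top = edge_map(gray, T, 1, 0)
    return [[WHITE if WHITE in (a, b) else BLACK for a, b in zip(ra, rb)]
            for ra, rb in zip(left, top)]
```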

3.3 SOME EDGE MAPS

This section shows the edge maps obtained by applying the proposed algorithm to the Lena image, to an artificially created image of smoothed concentric circles, and to text images. The three regions of the concentric circles are filled with three different gray levels. In our algorithm the threshold value is fixed; it is not changed from one picture to the next.

The edge maps produced by various other algorithms were all full of spurious edges.

However, the new algorithm remained consistent, providing visibly clean results at the default values of the input parameters.

4. STRAIGHT LINE DETECTION—HOUGH TRANSFORM

The Hough transform is a widely used method for finding global patterns such as lines, circles, and ellipses in an image by localizing them in a parameterized space. It is especially useful in lane detection because lines can be easily detected as points in Hough transform space, based on the polar representation of Equation 1:

$$\rho = x\cos\theta + y\sin\theta \qquad (1)$$

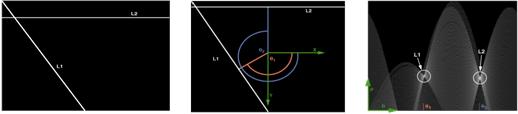

The meaning of this equation can be visualized by extending a perpendicular from the given line to the origin, such that θ is the angle that the perpendicular makes with the abscissa and ρ is the length of the perpendicular. Thus, one pair of coordinates (ρ, θ) fully describes the line. Lines L1 and L2 in Figure a demonstrate this concept.

Figure b shows that L1 is defined by θ1 and the length of the red perpendicular, while L2 is defined by θ2 and the length of the blue perpendicular line.

Figure (a), Figure (b), Figure (c)

Figure c shows the Hough transform of the image in Figure a. The range for θ is [0, 2π], and ρ ranges from 0 to one-half the diagonal of the input image in Figure a. The two bright regions correspond to local maxima, which can be used to reconstruct the two lines in Figure a.

Another way to look at the Hough Transform is to consider a way that the algorithm could be implemented intuitively:

1. Visit only white pixels in the binary image.

2. For each pixel and every θ value being considered, draw the line through the pixel whose perpendicular from the origin makes angle θ with the abscissa. Then calculate ρ, the length of that perpendicular.

3. Record this (ρ, θ) pair in an accumulation table.

4. Repeat steps 1–3 for every white pixel in the image.

5. Search the accumulation table for the (ρ, θ) pairs encountered most often.

These pairs describe the most probable “lines” in the input image, because in order to register a high accumulation value, there had to be many white pixels that existed along the line described by the (ρ, θ) pair.
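The five steps above map onto a short Python sketch of the Hough accumulator. It assumes the origin is at the top-left pixel, with x along columns and y along rows, and it rounds ρ to the nearest integer bin. The theta_range parameter allows the search to be restricted to a narrow band of θ values when assumptions about lane markings permit it. The function name and defaults are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def hough_lines(binary: List[List[int]], n_theta: int = 180,
                theta_range: Tuple[float, float] = (0.0, math.pi),
                top_k: int = 2) -> List[Tuple[int, int, float]]:
    """Vote in (rho, theta) space for every nonzero (white) pixel.

    rho = x*cos(theta) + y*sin(theta), origin at the top-left pixel, x along
    columns, y along rows; rho is rounded to the nearest integer bin.
    Returns the top_k cells as (votes, rho, theta), most votes first.
    """
    lo, hi = theta_range
    thetas = [lo + k * (hi - lo) / n_theta for k in range(n_theta)]
    trig = [(math.cos(t), math.sin(t)) for t in thetas]   # computed once per theta
    acc: Dict[Tuple[int, int], int] = {}
    for y, row in enumerate(binary):                       # step 1: white pixels only
        for x, value in enumerate(row):
            if not value:
                continue
            for k, (c, s) in enumerate(trig):              # step 2: every theta
                rho = round(x * c + y * s)
                acc[(rho, k)] = acc.get((rho, k), 0) + 1   # step 3: accumulate
    best = sorted(acc.items(), key=lambda item: item[1], reverse=True)[:top_k]  # step 5
    return [(votes, rho, thetas[k]) for (rho, k), votes in best]
```

Consider a single vertical line of white pixels at x = 5. The (ρ = 5, θ = 0) cell collects one vote per pixel. Neighboring θ cells can tie with it because of rounding, which is one reason practical implementations look for local maxima rather than single cells.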

The Hough transform is computationally intensive because a sinusoidal curve is calculated for each pixel in the input image. However, certain techniques can speed up the computation considerably.

First, some of the computation terms can be computed ahead of time, so that they can be referenced quickly through a lookup table. In Blackfin’s fixed-point architecture it is very useful to store the lookup table only for the cosine function. Since the sine values are 90 degrees out of phase with the cosines, the same table can be used, with an offset. With the lookup tables in use, the computation of Equation (1) can be represented as two fixed-point multiplications and one addition.
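The following is a minimal sketch of the lookup-table idea. It assumes Q15 fixed-point values and a table resolution of one degree. Only cosines are stored, and sine is read from the same table with a 90-degree offset. Each ρ then costs two integer multiplications, one addition, and a rounding shift. Python integers stand in for the processor's fixed-point registers, and the names are hypothetical.

```python
import math

Q = 15  # Q15: 1.0 is represented as 1 << 15 (clamped to the int16 range)
COS_LUT = [max(-32768, min(32767, round(math.cos(math.radians(d)) * (1 << Q))))
           for d in range(360)]  # one entry per degree (assumed resolution)


def cos_q15(deg: int) -> int:
    return COS_LUT[deg % 360]


def sin_q15(deg: int) -> int:
    # sin(theta) = cos(theta - 90 degrees): same table, offset index
    return COS_LUT[(deg - 90) % 360]


def rho_fixed(x: int, y: int, deg: int) -> int:
    """rho = x*cos(theta) + y*sin(theta) with two multiplies, one add, one shift."""
    acc = x * cos_q15(deg) + y * sin_q15(deg)  # Q15 accumulator
    return (acc + (1 << (Q - 1))) >> Q          # round to the nearest integer
```

For example, rho_fixed(100, 100, 45) yields 141, matching the floating-point value 141.42 after rounding.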

Another factor that can improve performance is a set of assumptions about the nature and location of lane markings within the input image. By considering only those input points that could potentially be lane markings, a large number of unnecessary calculations can be avoided, since only a narrow range of θ values need be considered for each white pixel.

The output of a Hough Transform is a set of straight lines that could potentially be lane markings. Certain parameters of these lines can be calculated by simple geometric equations. Among the parameters useful for further analysis are the offset from the camera’s center axis, the widths of the detected lines, and the angles with respect to the position of the camera. Since lane markings in many highway systems are standardized, a set of rules can eliminate some lines from the list of lane-marking candidates. The set of possible lane-marking variables can then be used to derive the position of the car.
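The sketch below is one example of these simple geometric calculations. It finds where each candidate line crosses the bottom image row and keeps the nearest candidate on either side of the image center. It then converts the car's offset from the lane center into meters. The sketch assumes that the camera is mounted on the car's center axis, and it uses the polar form ρ = x cos θ + y sin θ with the origin at the top-left pixel. The 3.7 m lane width and the rule that rejects near-horizontal lines are hypothetical parameters.

```python
import math
from typing import List, Optional, Tuple


def x_at_row(rho: float, theta: float, y: float) -> float:
    """Column where the line rho = x cos(theta) + y sin(theta) crosses row y."""
    return (rho - y * math.sin(theta)) / math.cos(theta)


def lateral_offset(lines: List[Tuple[float, float]], width: int, height: int,
                   lane_width_m: float = 3.7, min_abs_cos: float = 0.3
                   ) -> Optional[Tuple[float, float, float]]:
    """Estimate the car's offset from the lane center, in meters.

    Hypothetical rules: discard near-horizontal lines (|cos theta| small),
    then pick the nearest remaining line on each side of the image center at
    the bottom row. Returns (offset_m, x_left, x_right), with a positive
    offset meaning the car is right of the lane center, or None if a side
    has no candidate.
    """
    y = height - 1
    center = (width - 1) / 2
    xs = [x_at_row(r, t, y) for r, t in lines if abs(math.cos(t)) >= min_abs_cos]
    left = [x for x in xs if x < center]
    right = [x for x in xs if x >= center]
    if not left or not right:
        return None
    x_left, x_right = max(left), min(right)
    meters_per_px = lane_width_m / (x_right - x_left)  # lane width sets the scale
    offset_m = (center - (x_left + x_right) / 2) * meters_per_px
    return offset_m, x_left, x_right
```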

4.2 LANE TRACKING

Lane information can be determined from a variety of possible sources within an automobile. This information can be combined with measurements of vehicle-related parameters (e.g., velocity, acceleration, etc.) to assist in lane tracking. Based on the results of these measurements, the lane-departure system can make an intelligent decision as to whether an unintentional departure is in progress. In advanced systems, other factors could be modeled, such as the time of day, road conditions, and driver alertness. The problem of estimating lane geometry is a challenge that often calls for using a Kalman filter to estimate the road curvature. Specifically, the Kalman filter can predict future road information—which can then be used in the next frame to reduce the computational load presented by the Hough transform.

As described earlier, the Hough transform is used to find lines in each image. But these lines also need to be tracked over a series of images. In general, a Kalman filter can be described as a recursive filter that estimates the future state of an object. In this case, the object is a line. The state of the line is based on its location and its motion path across several frames. Along with the road state itself, the Kalman filter provides a variance for each state. The predicted state and the variance can be used in conjunction to narrow the search space of the Hough transform in future frames, which saves processing cycles.
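A constant-velocity Kalman filter for a single line parameter, such as ρ, gives a concrete picture of this tracking. The state is the parameter and its change per frame. The Hough measurement updates the state, and the predicted value plus its variance yields a search window for the next frame. The noise values q and r, the initial uncertainty, and the three-sigma window are assumptions that would need tuning. Tracking θ would use a second, similar filter.

```python
import math


class LineKalman:
    """Constant-velocity Kalman filter for one Hough line parameter (e.g. rho).

    State s = [value, change per frame]; measurement z = value found by the
    Hough transform this frame. q and r are hypothetical noise settings.
    """

    def __init__(self, z0: float, q: float = 0.5, r: float = 4.0):
        self.s = [z0, 0.0]
        self.P = [[r, 0.0], [0.0, 10.0]]  # initial uncertainty (assumed)
        self.q, self.r = q, r

    def predict(self) -> float:
        """Advance one frame: s = F s, P = F P F^T + Q, with F = [[1, 1], [0, 1]]."""
        v, dv = self.s
        self.s = [v + dv, dv]
        (a, b), (c, d) = self.P
        self.P = [[a + b + c + d + self.q, b + d],
                  [c + d, d + self.q]]
        return self.s[0]

    def search_window(self, n_sigma: float = 3.0):
        """Range of the parameter worth searching in the next Hough transform."""
        spread = n_sigma * math.sqrt(self.P[0][0] + self.r)
        return self.s[0] - spread, self.s[0] + spread

    def update(self, z: float) -> None:
        """Correct the prediction with measurement z (H = [1, 0])."""
        (a, b), (c, d) = self.P
        k0, k1 = a / (a + self.r), c / (a + self.r)  # Kalman gain
        innov = z - self.s[0]
        self.s = [self.s[0] + k0 * innov, self.s[1] + k1 * innov]
        self.P = [[(1 - k0) * a, (1 - k0) * b],
                  [c - k1 * a, d - k1 * b]]


if __name__ == "__main__":
    kf = LineKalman(100.0)
    for k in range(1, 20):  # the line drifts by 2 units of rho per frame
        kf.predict()
        kf.update(100.0 + 2 * k)
    print(kf.predict(), kf.search_window())
```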

4.3 DECISION MAKING: CURRENT CAR POSITION OR TIME TO LANE CROSSING

From our experience, we know that false positives are always undesirable. There is no quicker way to get a consumer to disable an optional safety feature than to have it indicate a problem that does not exist.

With a processing framework in place, system designers can add their own intellectual property (IP) to the decision phase of each of the processing threads. The simplest approach might be to take into account other vehicle attributes when making a decision. For example, a lane-change warning could be suppressed when a lane change is perceived to be intentional—as when a blinker is used or when the brake is applied. More complex systems may factor in GPS coordinate data, occupant driving profile, time of day, weather, and other parameters.
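The time-to-lane-crossing idea, combined with the blinker/brake suppression described above, can be sketched as follows. The lane half-width of 1.85 m, the car half-width of 0.9 m, and the 1 s threshold are hypothetical values. The lateral offset and speed are assumed to come from the lane-tracking stage.

```python
import math


def time_to_lane_crossing(offset_m: float, lateral_speed_mps: float,
                          half_lane_m: float = 1.85, half_car_m: float = 0.9) -> float:
    """Seconds until a wheel reaches the marking on the side the car drifts toward.

    offset_m: car center relative to lane center (positive = right).
    lateral_speed_mps: rate of change of offset_m (positive = moving right).
    """
    if lateral_speed_mps == 0:
        return math.inf
    direction = 1.0 if lateral_speed_mps > 0 else -1.0
    margin = half_lane_m - half_car_m - direction * offset_m  # room left on that side
    return max(margin, 0.0) / abs(lateral_speed_mps)


def should_warn(offset_m: float, lateral_speed_mps: float, blinker_on: bool = False,
                braking: bool = False, tlc_threshold_s: float = 1.0) -> bool:
    """Warn only for a seemingly unintentional departure that is close in time."""
    if blinker_on or braking:  # lane change perceived as intentional
        return False
    return time_to_lane_crossing(offset_m, lateral_speed_mps) < tlc_threshold_s
```

In this design, a car drifting toward the center or holding a steady position never triggers a warning. This reflects the concern about false positives raised above.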

CONCLUSION

In the foregoing discussion, we have described an example of how a vehicle’s position within its lane could be monitored using an edge detection technique together with the Hough transform. The point we have sought to establish is that when a flexible media processor is available for the design, there is plenty of room to consider feature additions and algorithm optimizations. Our own contribution is the edge detection algorithm, which in our experiments produced clearer and more precise edge maps than other algorithms proposed for image-based lane departure systems.
