Technical Paper Title: HAPTIC TECHNOLOGY

Authors: Daksha Yemala & Devulapalli Visishtha, 2nd Year BTech, Electronic & Telematics (ETM)

College: G. Narayanamma Institute of Technology and Science (Women), Shaikpet, Hyderabad

ABSTRACT

Haptics is the science of applying touch (tactile) sensation and control to interact with computer applications. The word is derived from the Greek word “haptein” meaning “to fasten.”

Haptic technology is like exploring the virtual world with a stick. The computer communicates sensations through a haptic interface (a stick, scalpel, racket, or pen) that is connected to a force-exerting motor. In combination with a visual display, haptic technology can be used to train people for tasks requiring hand-eye coordination, such as surgery and spaceship maneuvers.

In this paper we explain how haptic technology works and why it is significant. We then describe a few applications of haptic technology, along with its advantages and disadvantages. Finally, we conclude by mentioning a few future developments.

INTRODUCTION

Haptics refers to sensing and manipulation through touch. By using special input/output devices (joysticks, data gloves, or other devices), users can receive feedback from computer applications in the form of felt sensations in the hand or other parts of the body. In combination with a visual display, haptic technology can be used to train people for tasks requiring hand-eye coordination, such as surgery and spaceship maneuvers. It can also be used for games in which you feel as well as see your interactions with images. For example, you might play tennis with another computer user somewhere else in the world. Both of you can see the moving ball and, using the haptic device, position and swing your tennis racket and feel the impact of the ball.

Haptic technologies provide force feedback to users about the physical properties and movements of virtual objects represented by a computer. A haptic joystick, for example, offers dynamic resistance to the user based on the actions of a video game. Historically, human-computer interaction has been visual: words, data, or images on a screen. Input devices such as the keyboard or the mouse translate human movements into actions on the screen but provide no feedback to the user about those actions. Haptics incorporates both touch (tactile) and motion (kinesthetic) elements. For applications that simulate real physical properties, such as weight, momentum, friction, texture, or resistance, haptics communicates those properties through interfaces that let users “feel” what is happening on the screen.

How Does Haptics Work?

Typically, an Immersion haptics system includes:

- Sensor(s)

- Actuator (motor) control circuitry

- One or more actuators that either vibrate or exert force

- Real-time algorithms (actuator control software, which Immersion calls a “player”) and a haptic effect library

- Application programming interface (API), and often a haptic effect authoring tool

The Immersion API is used to program calls to the actuator into the product’s operating system (OS). The calls specify which effect in the haptic effect library to play.

When the user interacts with the product’s buttons, touch screen, lever, joystick/wheel, or other control, the control-position information is sent to the OS, which then sends the play command through the control circuitry to the actuator.
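The flow described above (control event, OS, play command, actuator) can be sketched in a few lines of Python. This is only an illustration under our own assumptions and is not the Immersion API: `Effect`, `EFFECT_LIBRARY`, `EVENT_TO_EFFECT`, `HapticPlayer`, and `on_control_event` are hypothetical names, the effect parameters are made up, and the actuator is simulated by recording the commands that would be sent to it.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Effect:
    """One entry in a (hypothetical) haptic effect library."""
    magnitude: float   # drive strength, 0.0 to 1.0
    duration_ms: int   # how long the actuator is driven


# Hypothetical effect library: effect name -> parameters (values are made up).
EFFECT_LIBRARY = {
    "click": Effect(magnitude=0.8, duration_ms=15),
    "bump": Effect(magnitude=1.0, duration_ms=40),
    "detent": Effect(magnitude=0.4, duration_ms=10),
}

# Hypothetical mapping from control events to effect names.
EVENT_TO_EFFECT = {
    "button_press": "click",
    "slider_step": "detent",
    "end_of_travel": "bump",
}


@dataclass
class HapticPlayer:
    """Stands in for the actuator control software (the "player")."""
    sent: list = field(default_factory=list)  # commands sent to the actuator

    def play(self, effect_name):
        effect = EFFECT_LIBRARY[effect_name]
        # A real system would drive the control circuitry here;
        # this sketch only records the command.
        self.sent.append((effect_name, effect.magnitude, effect.duration_ms))
        return effect


def on_control_event(player, event):
    """OS-side handler: look up the effect for a control event and play it."""
    effect_name = EVENT_TO_EFFECT.get(event)
    if effect_name is None:
        return None  # no haptic effect is configured for this event
    return player.play(effect_name)


player = HapticPlayer()
on_control_event(player, "button_press")
on_control_event(player, "end_of_travel")
print(player.sent)
```

Keeping the event-to-effect mapping in a table separate from the effect library mirrors the split in the text between the API call (which effect to play) and the library (what that effect feels like).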

Haptics applications use specialized hardware to provide sensory feedback that simulates physical properties and forces.

Haptic interfaces can take many forms; a common configuration uses separate mechanical linkages to connect a person’s fingers to a computer interface. When the user moves their fingers, sensors translate those motions into actions on a screen, and motors transmit feedback through the linkages to the user’s fingers. The screen might show a ball, for example, and by manipulating a virtual hand through the device, the user can “feel” the ball, discerning how much it weighs or the texture of its surface. Because the ball and its environment are purely virtual, their properties can be changed: air can be added to an underinflated ball to make it less squishy, or the amount of gravity can be altered to let users feel how much the ball would weigh on the moon.
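The virtual ball example comes down to two simple relations: weight is mass times gravitational acceleration, and the force felt when squeezing can be approximated by a spring (Hooke's law). The sketch below is our own illustration of that idea; the ball mass and the two stiffness values are assumed numbers, and a real device would use a more detailed contact model.

```python
EARTH_G = 9.81  # gravitational acceleration on Earth, m/s^2
MOON_G = 1.62   # gravitational acceleration on the Moon, m/s^2


def weight(mass_kg, gravity):
    """Downward force (in newtons) the user should feel when holding the ball."""
    return mass_kg * gravity


def squeeze_force(stiffness_n_per_m, compression_m):
    """Hooke's law: force (in newtons) pushing back on the fingers.

    Negative compression means the fingers are not touching the ball,
    so no force is returned.
    """
    return stiffness_n_per_m * max(compression_m, 0.0)


BALL_MASS = 0.45            # kg, assumed value for illustration
SOFT_STIFFNESS = 500.0      # N/m, assumed: underinflated, squishy ball
FIRM_STIFFNESS = 2000.0     # N/m, assumed: fully inflated ball

print("weight on Earth:", weight(BALL_MASS, EARTH_G), "N")
print("weight on Moon: ", weight(BALL_MASS, MOON_G), "N")
print("soft ball, 1 cm squeeze:", squeeze_force(SOFT_STIFFNESS, 0.01), "N")
print("firm ball, 1 cm squeeze:", squeeze_force(FIRM_STIFFNESS, 0.01), "N")
```

Changing one constant (gravity or stiffness) changes what the user feels, which is the point of the paragraph above: the properties are only parameters of the simulation.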

In this way, a product’s haptic effects are matched to their context, which makes operation more engaging, intuitive, and natural.

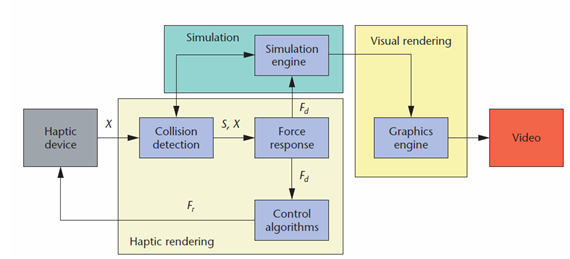

“The actual process used by the software to perform its calculations is called haptic rendering.”

We split haptic rendering into three main blocks. Collision-detection algorithms provide information about contacts S occurring between an avatar at position X and objects in the virtual environment. Force-response algorithms return the ideal interaction force Fd between avatar and virtual objects. Control algorithms return a force Fr to the user, approximating the ideal interaction force to the best of the device’s capabilities.
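The three blocks of haptic rendering can be illustrated with a small sketch. It makes several simplifying assumptions of our own: the avatar is a single point, the only virtual object is a sphere, the ideal force comes from a penalty (spring) model, and the control block simply scales the ideal force down to a maximum the device can output. The function names and parameters are hypothetical; real haptic loops typically run at a high, fixed update rate and use more careful control.

```python
import math


def detect_collision(avatar_pos, sphere_center, sphere_radius):
    """Collision detection: return (penetration depth, outward normal) or None."""
    offset = [a - c for a, c in zip(avatar_pos, sphere_center)]
    distance = math.sqrt(sum(o * o for o in offset))
    if distance >= sphere_radius or distance == 0.0:
        # No contact. The exact-center case has no defined normal and is
        # treated as "no contact" to keep the sketch short.
        return None
    normal = [o / distance for o in offset]
    return sphere_radius - distance, normal


def force_response(contact, stiffness):
    """Force response: ideal interaction force from a penalty (spring) model."""
    if contact is None:
        return [0.0, 0.0, 0.0]
    depth, normal = contact
    return [stiffness * depth * n for n in normal]


def control(ideal_force, max_force):
    """Control: approximate the ideal force within the device's force limit."""
    magnitude = math.sqrt(sum(f * f for f in ideal_force))
    if magnitude <= max_force:
        return list(ideal_force)
    scale = max_force / magnitude
    return [f * scale for f in ideal_force]


def render_step(avatar_pos, sphere_center, sphere_radius, stiffness, max_force):
    """One pass through the three blocks for a single avatar position."""
    contact = detect_collision(avatar_pos, sphere_center, sphere_radius)
    ideal = force_response(contact, stiffness)
    return control(ideal, max_force)


# Avatar 1 cm inside a unit sphere; the ideal force exceeds the 5 N limit.
print(render_step([0.0, 0.0, 0.99], [0.0, 0.0, 0.0], 1.0, 1000.0, 5.0))
```

The difference between the output of `force_response` and the output of `control` is the difference between the ideal interaction force and the force actually returned to the user.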

APPLICATIONS

1) FOR THE VISUALLY IMPAIRED

Today, Braille displays are very expensive, even though they are confined to text and lack integral touch-screens. The haptic display device, on the other hand, will include an integrated touch-screen so that users can push on areas of the screen to activate menus and other graphical icons that they feel there. With the ability to display graphical images and activate them by touch, the wide world of graphical information displays available on computers today can finally be accessed by the blind. A multimodal tool allows blind people to create virtual graphs independently. Multimodal interactions in the process of graph creation and exploration are provided by using a low-cost haptic device.

Haptic technology can also be incorporated into touchable maps for the blind. To create a map, a video is shot of a real-world location, or of an architectural model of a building or a city block. Software evaluates the video frame by frame to determine the shape and location of every object. The data results in a three-dimensional grid of force fields for each structure. Using a haptic interface device, a blind person can feel these forces and, along with audio cues, get a much better feel for the layout of a city or building.
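The idea of a grid of force fields can be sketched as follows. This is a deliberately simplified illustration and not the method used by the mapping software described above: it works on a two-dimensional slice instead of a three-dimensional grid, it starts from a hand-written occupancy grid instead of video analysis, and the rule for computing the force (push from an occupied cell toward its free neighbours) is our own assumption. `build_force_field` is a hypothetical name.

```python
def build_force_field(occupancy, strength=1.0):
    """Return a grid of (fx, fy) forces for a 2D occupancy grid.

    occupancy[r][c] is 1 where a structure (for example, a wall) is present
    and 0 in free space. Occupied cells push the probe toward neighbouring
    free cells; free cells exert no force. x grows with the column index
    and y grows with the row index.
    """
    rows, cols = len(occupancy), len(occupancy[0])
    field = [[(0.0, 0.0)] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if not occupancy[r][c]:
                continue  # free space: no force
            fx = fy = 0.0
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                inside = 0 <= nr < rows and 0 <= nc < cols
                if inside and not occupancy[nr][nc]:
                    fx += dc * strength
                    fy += dr * strength
            field[r][c] = (fx, fy)
    return field


# A short wall (two occupied cells) surrounded by free space.
city_block = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]

for row in build_force_field(city_block):
    print(row)
```

At run time, a haptic device would look up the cell under the user's probe and apply the stored force, so that walls and buildings are felt as regions that push the hand back toward open space.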

Virtual Braille Display:

The Virtual Braille Display (VBD) project was created to investigate the possibility of using the lateral skin stretch technology of the STReSS tactile display for Braille. The project was initially conducted at VisuAide Inc. and is now being continued in McGill’s Haptics Laboratory.

2) GAMING:

1. Casino Gaming:

- Allow users to feel sensations that intuitively guide them through operation or provide a more realistic and immersive experience

- Add richness to the experience by giving people the option to touch as well as use their sight and hearing

- Compensate for the noisy casino environment where sight and sound aren’t giving adequate feedback

- Synchronize haptic feedback with sound and graphical images to create a more immersive, multisensory experience

2. Gaming Wheel:

- Use Immersion force feedback to recreate bumps, crashes, and traction loss with jaw-dropping realism

- Let users feel every inch of the road for maximum control and the ultimate racing experience

- Provide 900-degree wheel rotation and force feedback with exceptional responsiveness to game action, without dead zones or lag time

3. Game Pad:

- Distinctive Saitek styling

- Immersion’s TouchSense Rumble technology for super realistic responses

- Advanced analog and digital controls for hours of fatigue-free gaming

- 4-position digital throttle with LED indicators

- 8-way D-pad

- 2 shoulder triggers

3) AUTOMOTIVE:

Today’s new car buyers want separate climate controls for the driver and front seat passenger, triple-zone rear A/C, and separate controls in the rear. The problem is that the more sophisticated the climate control system, the more complex it can be to operate. Many motorists don’t fully understand their systems.

Touch Guides and Informs. Haptics can help make climate control systems more intuitive to operate. Research shows that touch feedback can convey significant quantities of information, and its use need not be confined to simple notifications.

Touch Makes It Easier. The climate control system may be complex, but touch feedback can make it seem easier. Human touch reaction is immediate, so receiving information this way improves user accuracy and speed and therefore reduces distraction. With touch feedback guiding control settings, users may not even need to look at the controls, which further improves safety. Research also shows that using the touch channel for information reduces complication and stress.

Haptics Makes It Distinct and Memorable. TouchSense® high-fidelity haptic feedback helps users distinguish and remember tactile cues and what they mean in the context of operation.

All sorts of digital switches and onscreen buttons can supply distinct sensations to allow greater discrimination for users and more intuitive access to climate control features and functions.

4) CONSUMER ELECTRONICS

CUE RADIO

The Cue Radio is a best-in-class table radio. It provides a haptic response that intuitively communicates station signal strength through the tuner knob. It features “touch sensitive” tuning, which is its tactile feedback mechanism. In iPod mode, users feel touch feedback as they roll over menu choices for artist, album, and genre. The radio tells users when they have reached the end of a menu by using haptics to stop the dial from turning further. It is a stylish and practical choice for consumers, and its tactile feedback concept is very intuitive.
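The two knob behaviours described above (a dial that stops at the end of a menu, and a tuner knob that conveys signal strength) can be modelled as torques applied to the knob. The sketch below is our own illustration, not the radio's actual firmware: the spring-like end stop, the rule that a stronger signal adds more resistance, and all names and constants are assumptions.

```python
def end_stop_torque(angle, min_angle, max_angle, stiffness):
    """Torque that pushes the knob back once it passes either end of a menu.

    Inside [min_angle, max_angle] the knob turns freely (zero torque).
    Outside that range a spring-like torque opposes further rotation.
    """
    if angle < min_angle:
        return stiffness * (min_angle - angle)    # push back toward the range
    if angle > max_angle:
        return -stiffness * (angle - max_angle)   # push back toward the range
    return 0.0


def tuning_torque(signal_strength, velocity, max_damping=0.1):
    """Resistance felt while tuning, opposing the direction of rotation.

    Assumed mapping: signal_strength is in the range 0.0 to 1.0 (values
    outside it are clamped), and a stronger signal makes the knob feel
    "heavier", so a station can be found by feel.
    """
    strength = min(max(signal_strength, 0.0), 1.0)
    return -max_damping * strength * velocity


# Knob turned 0.2 rad past the end of a menu that spans 0 to 1 rad.
print(end_stop_torque(1.2, 0.0, 1.0, stiffness=5.0))

# Same turning speed, weak versus strong station signal.
print(tuning_torque(0.1, velocity=2.0), tuning_torque(0.9, velocity=2.0))
```

Both functions return a torque whose sign opposes the unwanted motion, so the motor behind the knob resists the user's hand without needing any visual cue.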

5) VIRTUAL EDUCATION:

Research indicates that a considerable portion of people are kinesthetic or tactile learners—they understand better and remember more when education involves movement and touch. Because formal education has traditionally focused on visual (reading) and auditory (hearing) learning, these learners have been at a disadvantage. Haptics opens the door to an entirely different learning method and style, one that for many students provides the best opportunity to learn. Moreover, even for visual and auditory learners, haptics can improve learning. For a broad range of subject matter, incorporating sensory data and feedback allows for a richer understanding of the concepts at hand.

Haptics tools are used in a variety of educational settings, both to teach concepts and to train students in specific techniques. Some faculties employ haptic devices to teach physics, for example, giving students a virtual environment in which they can manipulate and experience the physical properties of objects and the forces that act on them. Such devices allow students to interact with experiments that demonstrate gravity, friction, momentum, and other forces. In subjects such as biology and chemistry, haptic devices create virtual models of molecules and other microscopic structures that students can manipulate. In this way, students can “feel” the surfaces of B cells and antigens, for example, testing how they fit together and developing a deeper understanding of how a healthy immune system functions.

Many disciplines depend on the execution of physical techniques with a high degree of precision and dexterity, and instruction in these fields can take advantage of haptic technology to help students hone those skills. Medical students can use haptic devices to develop a sense of what it feels like to give an epidural injection, perform laparoscopic surgical procedures, use dental or orthopedic drills, or carry out any number of other highly tactile techniques. Such simulators give users the opportunity to develop a tactile sense of the structures, organs, and tissues of the body. Flight simulators combine visual and auditory elements with haptic technology, including resistance and vibrations in the controls, allowing student pilots to experience the kinds of sensations they will feel when flying real planes.

ADVANTAGES

- Communication is centered on touch, and the digital world can behave like the real world.

- Working time is reduced since objects can be captured, manipulated, modified, and rescaled digitally.

- Medical simulators allow would-be surgeons to practice digitally, gaining confidence in a procedure before working on live patients.

- With haptic hardware and software, the designer can maneuver the part and feel the result, as if they were handling the physical object.

DISADVANTAGES

- The precision of touch requires a lot of advance design. With only a sense of touch, haptic interfaces cannot deliver warnings.

- Haptics applications can be extremely complex, requiring highly specialized hardware and considerable processing power.

- The complexity also means that many haptics projects rely on fixed installations of equipment and are not easily portable.

- Debugging is complicated because it involves real-time data analysis.

- As the objects being manipulated in haptics are virtual, a compelling interaction with the device requires that all of the physical properties and forces involved be programmed into the application. As a result, costs for haptics projects can be considerable.

CONCLUSION

Development and refinement of various kinds of haptic interfaces will continue, providing more and increasingly lifelike interactions with virtual objects and environments. Researchers will continue to investigate possible avenues for haptics to complement real experiences. Advances in hardware will provide opportunities to produce haptic devices in smaller packages, and haptic technology will find its way into increasingly commonplace tools.

Additionally, consumer-grade haptic devices are starting to appear on the market. As access to haptics increases, usage patterns and preferences will inform best practices and applications—ultimately, users will decide which activities are appropriately represented through haptics and which are perhaps better left in the real world.

Haptics is the future of online computing and e-commerce: it will enhance the shopping experience and let online shoppers feel merchandise without leaving home. As the applications of haptics multiply, the cost of haptic devices will drop in the future, and this will be one of the major drivers for commercializing haptics. With many new haptic devices being sold to industrial companies, haptics will soon be a part of a person’s normal computer interaction.

REFERENCES